APPS · March 2026

TongCLI: A CLI Tool for Your Terminal

TongCLI is a CLI tool that runs in your terminal, autonomously completing tasks described in natural language through programming.

Introduction

TongCLI is a CLI tool that runs in your terminal and autonomously completes tasks described in natural language by writing and running code. TongCLI is powered by Judy, an engine built on a multi-agent architecture and focused on enhancing the programming capabilities of CLI systems. Judy aims to become a core engine (harness) that can be reused in any Agentic system.

We believe that as Agent technology evolves, programming capabilities and multi-agent architecture will become indispensable features of all Agentic systems.

Core Capabilities

Programming Capabilities

Breaking Through Preset Tool Limitations, Freely Interacting with the Environment

On one hand, there are still a large number of software/services that have not been wrapped as tools that Agents can call.

On the other hand, providing Agents with a large number of preset tools will fill the LLM's context with numerous tool definitions, wasting tokens and affecting LLM performance.

By equipping Agents with programming capabilities, only two minimal tools (code editing and code execution) are needed for Agents to freely use any software or service. This removes the constraints on how Agents interact with the real world, so that tool limitations no longer bottleneck Agent capabilities.
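As a minimal sketch of what such a two-tool surface could look like, the following uses only the Python standard library. The names `edit_code`, `run_code`, and `demo` are hypothetical and chosen for illustration; they are not TongCLI's or Judy's actual interface.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def edit_code(path: Path, content: str) -> None:
    """Tool 1 (hypothetical): create or overwrite a source file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(content, encoding="utf-8")


def run_code(path: Path, timeout_s: float = 30.0) -> dict:
    """Tool 2 (hypothetical): run a Python file and capture its result."""
    proc = subprocess.run(
        [sys.executable, str(path)],
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    return {
        "exit_code": proc.returncode,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
    }


def demo() -> dict:
    # The agent writes a script that uses an ordinary library (here: json)
    # directly, instead of relying on a dedicated, pre-wrapped tool for it.
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "task.py"
        edit_code(script, "import json\nprint(json.dumps({'ok': True}))\n")
        return run_code(script)
```

With these two functions, anything reachable from a script (a library, a command-line program, an HTTP API) becomes usable without adding a new tool definition to the LLM's context.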

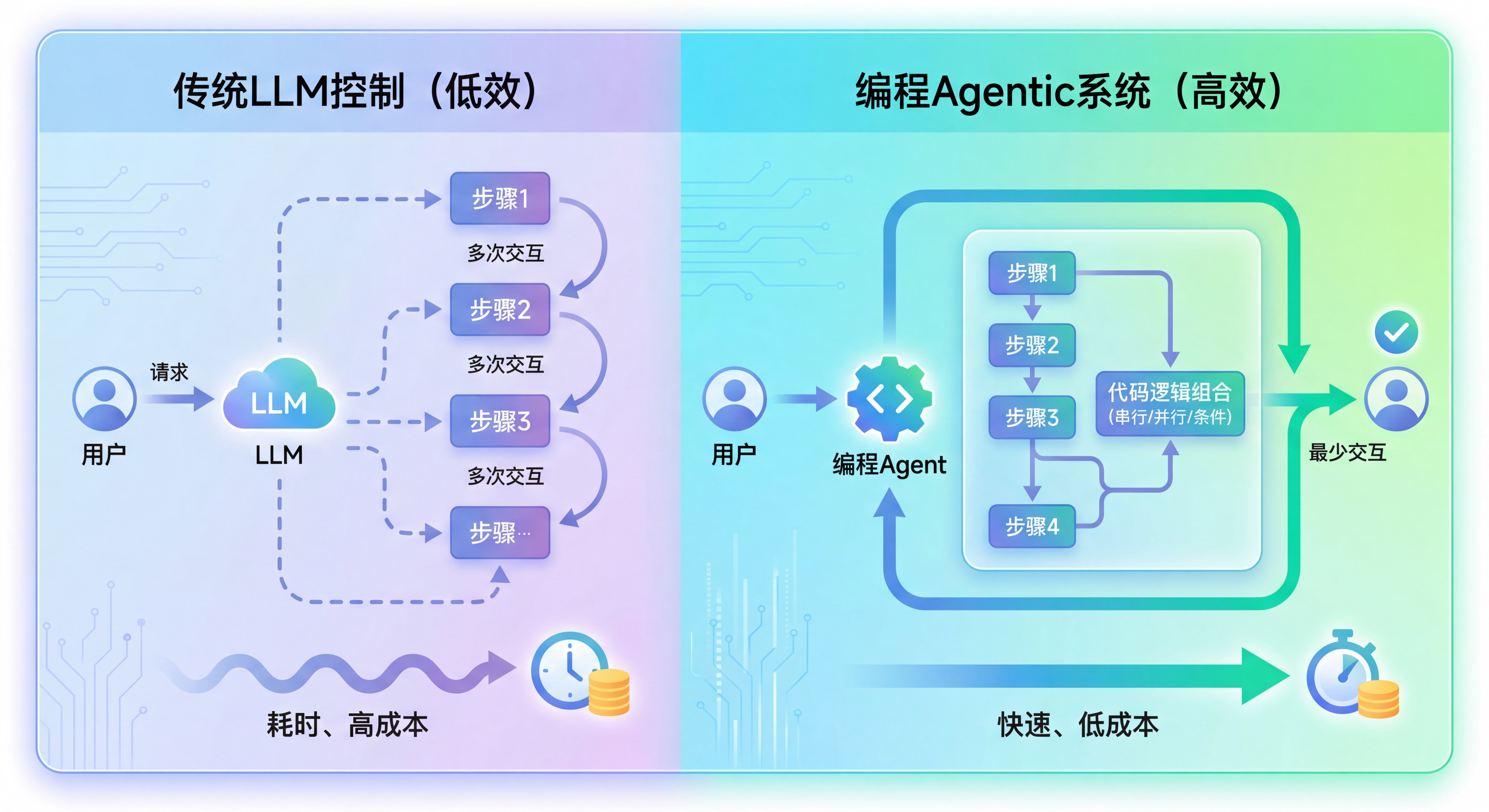

Running in the Most Efficient Way, Reducing Interaction Rounds

When multiple intermediate steps of a task need to be combined through deterministic logic such as serial, parallel, loop, or conditional-branch execution, Tool Calls offer no corresponding control or composition structures. The LLM itself must then act as the logic controller, which unnecessarily increases interaction rounds.

By leveraging the logic control structures inherent in programming languages, Agents can combine multiple steps through code, avoiding unnecessary LLM intervention between steps, reducing interaction rounds, and lowering time and computational costs.
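To illustrate, here is a small sketch in which parallel execution, a loop, and a conditional branch are all expressed in one script, so the whole pipeline runs in a single round. The step functions `fetch_size` and `needs_cleanup` are hypothetical stand-ins for real operations.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_size(name: str) -> int:
    """Hypothetical step: stand-in for a real call (file stat, API request)."""
    return len(name) * 100


def needs_cleanup(size: int, limit: int = 500) -> bool:
    """Hypothetical step: a deterministic rule evaluated by code, not the LLM."""
    return size > limit


def run_pipeline(names: list[str]) -> dict[str, str]:
    # Parallel: fetch all sizes at once instead of one tool call per item.
    with ThreadPoolExecutor(max_workers=4) as pool:
        sizes = dict(zip(names, pool.map(fetch_size, names)))

    report = {}
    # Loop + conditional branching: decided in code, with no extra LLM turn.
    for name, size in sizes.items():
        report[name] = "cleanup" if needs_cleanup(size) else "ok"
    return report
```

With plain tool calls, each fetch and each decision would typically cost a separate LLM turn; here the LLM only sees the final report.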

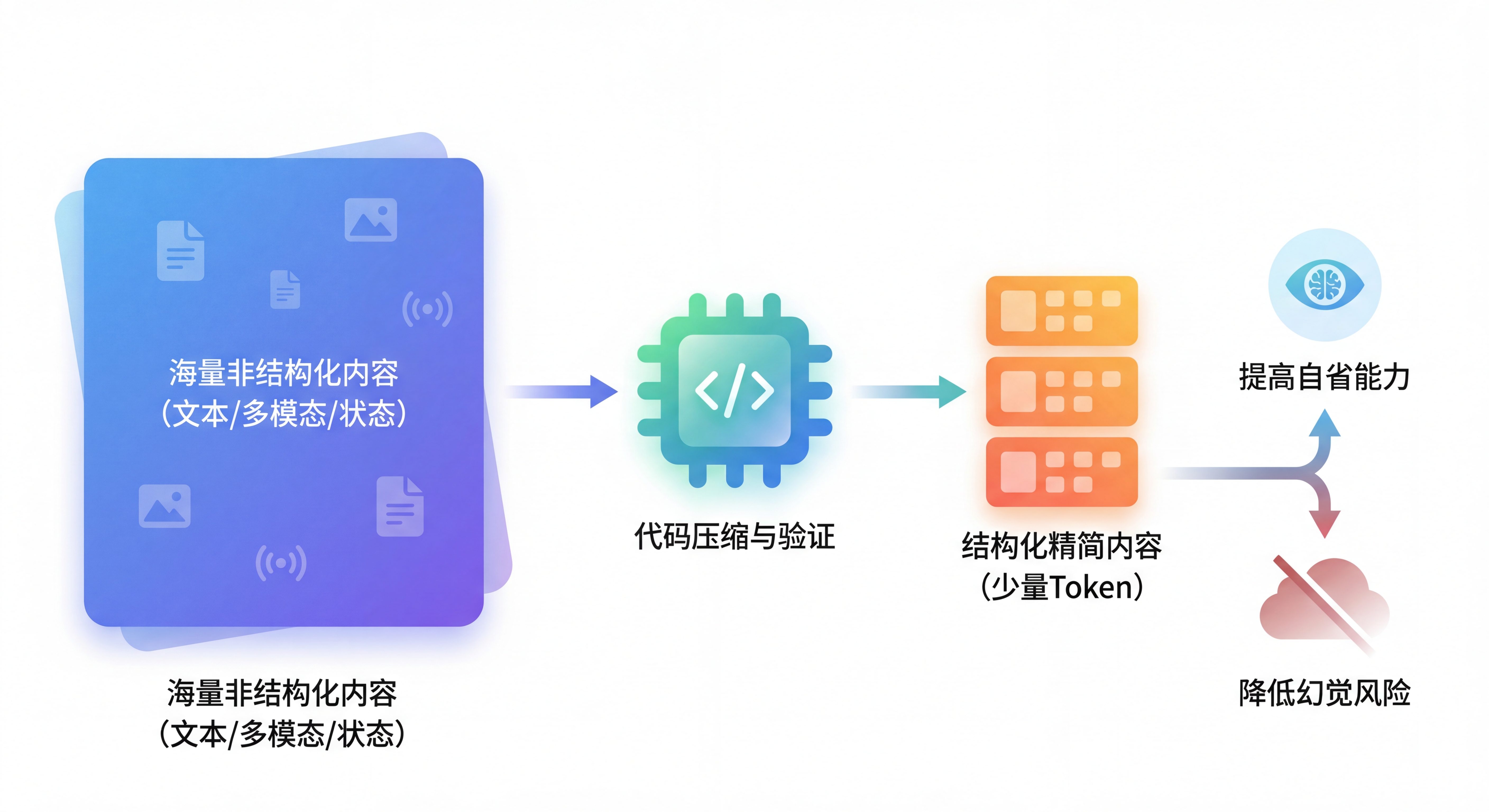

Improving Self-Reflection Capabilities, Reducing Hallucination Risks

By writing and running code, Agents can compress raw content (text, multimodal, environment state, etc.) that requires massive tokens into structured, concise content that can be represented with minimal tokens.

Deterministic, reproducible scripts and automated validation scripts, together with their structured outputs, improve the self-reflection capabilities of probabilistic Agents and reduce the risk of blind confidence and hallucination.
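The idea can be sketched as follows: a script reduces a raw log to a few structured fields, and a second deterministic check confirms the summary accounts for every line. The log contents, the line format, and the function names are assumptions made up for this example.

```python
import re
from collections import Counter

# Hypothetical raw content; a real log could be many thousands of lines.
RAW_LOG = """\
2026-03-01 10:00:01 INFO  build started
2026-03-01 10:00:05 ERROR test_login failed: timeout
2026-03-01 10:00:06 INFO  retrying
2026-03-01 10:00:09 ERROR test_login failed: timeout
2026-03-01 10:00:12 WARN  slow response
2026-03-01 10:00:15 INFO  build finished
"""

# Assumed line format: "<date> <time> <LEVEL> <message>".
LINE_RE = re.compile(r"^\S+ \S+ (?P<level>[A-Z]+)\s+(?P<msg>.*)$")


def summarize(log_text: str) -> dict:
    """Compress the raw log into level counts and the most frequent errors."""
    levels = Counter()
    errors = Counter()
    for line in log_text.splitlines():
        match = LINE_RE.match(line)
        if match is None:
            continue
        levels[match["level"]] += 1
        if match["level"] == "ERROR":
            errors[match["msg"]] += 1
    return {"levels": dict(levels), "top_errors": errors.most_common(3)}


def validate(summary: dict, log_text: str) -> bool:
    """Deterministic check: the counts must cover every non-empty line."""
    non_empty = [line for line in log_text.splitlines() if line.strip()]
    return sum(summary["levels"].values()) == len(non_empty)
```

The Agent then reasons over the compact summary and a pass/fail validation result rather than over the raw text.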

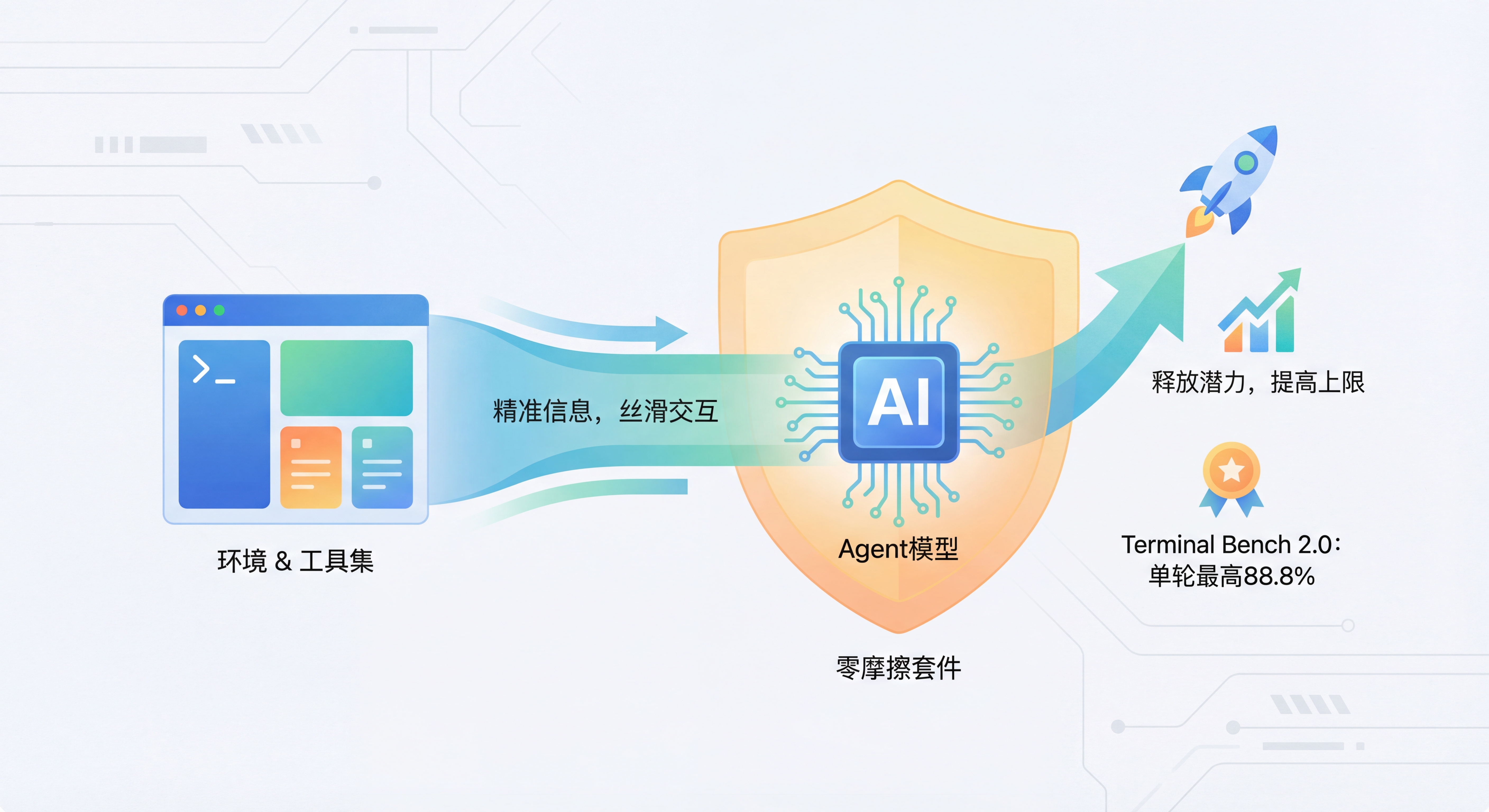

Zero-Friction Harness, Unleashing Model Potential

Different LLMs have different preferences when calling tools due to differences in training data and training methods. Tools that don't match LLM preferences will reduce call success rates.

At the same time, providing precise environment information and toolsets that help Agents interact seamlessly with the environment is crucial for unleashing model potential and raising the Agent ceiling.

TongCLI builds on extensive engineering experience and continuous optimization of Harness Design/Engineering, achieving a single-round score of up to 88.8% on Terminal-Bench 2.0.

Multi-Agent Architecture

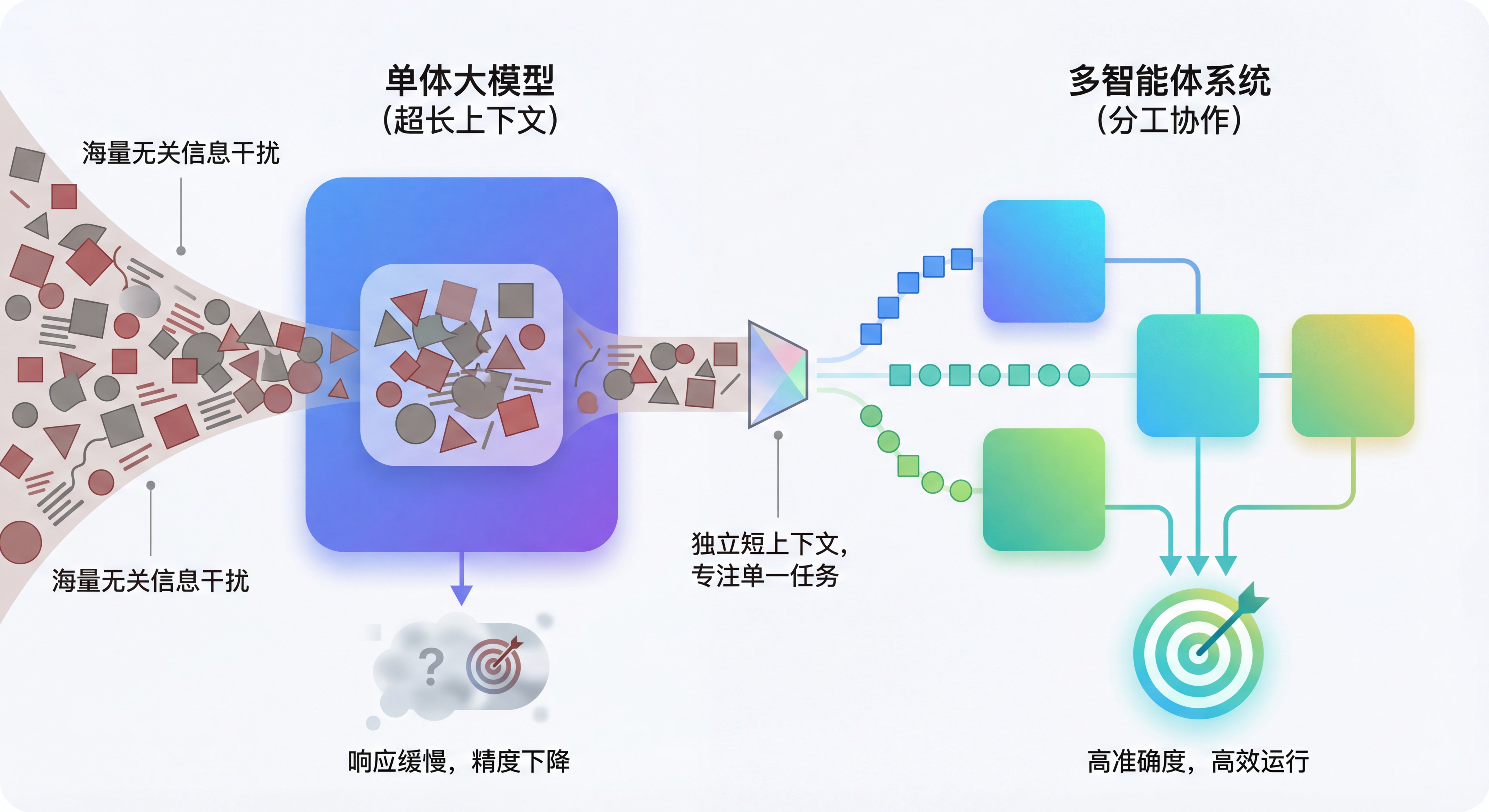

Avoiding Context Overload, Improving Agent Accuracy

Extensive research shows that as context length grows, LLM performance continuously degrades. When the context contains a large amount of information irrelevant to the current task, the LLM's responses will be severely disrupted.

Although most LLMs now support contexts up to 1M tokens, such long contexts are expensive in both time and computation, and are only suitable for simple tasks like needle in a haystack, performing poorly on complex tasks.

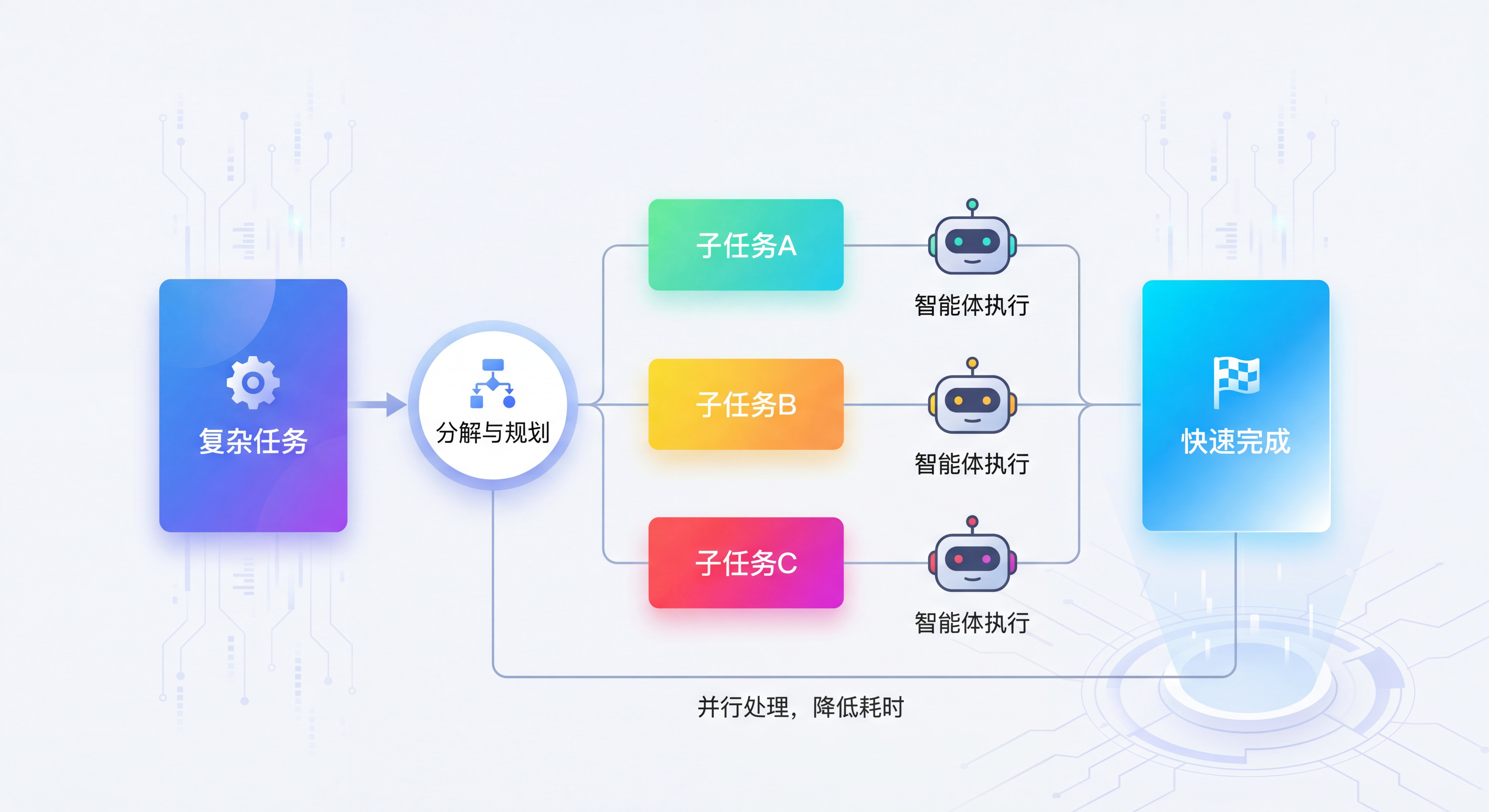

TongCLI trades the number of Agents for the context space of individual Agents, strictly limiting the steps and context length of each Agent, ensuring single-Agent precision.

Parallel Subtasks, Reducing Task Duration

During the planning phase, TongCLI breaks down complex tasks into a directed acyclic graph (DAG) of subtasks, identifies subtasks that can be parallelized, and assigns them to different Agents for execution, achieving subtask parallelization.
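A minimal sketch of this kind of scheduling, using Python's standard `graphlib.TopologicalSorter` to find subtasks whose dependencies are already satisfied and a thread pool to run them together. The plan contents and `run_subtask` are hypothetical; this is not TongCLI's planner.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical plan: each subtask maps to the set of subtasks it depends on.
PLAN = {
    "fetch_repo": set(),
    "run_linter": {"fetch_repo"},
    "run_tests": {"fetch_repo"},
    "write_report": {"run_linter", "run_tests"},
}


def run_subtask(name: str) -> str:
    """Hypothetical stand-in for handing a subtask to its own Agent."""
    return f"{name}: done"


def execute(plan: dict[str, set[str]]) -> list[list[str]]:
    """Run the plan batch by batch; each batch holds independent subtasks."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan is not a DAG
    batches = []
    with ThreadPoolExecutor(max_workers=4) as pool:
        while sorter.is_active():
            # Every subtask returned here has all of its dependencies done.
            ready = sorted(sorter.get_ready())
            list(pool.map(run_subtask, ready))  # run the batch in parallel
            sorter.done(*ready)
            batches.append(ready)
    return batches
```

In this plan, `run_linter` and `run_tests` both depend only on `fetch_repo`, so they land in the same batch and run concurrently.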

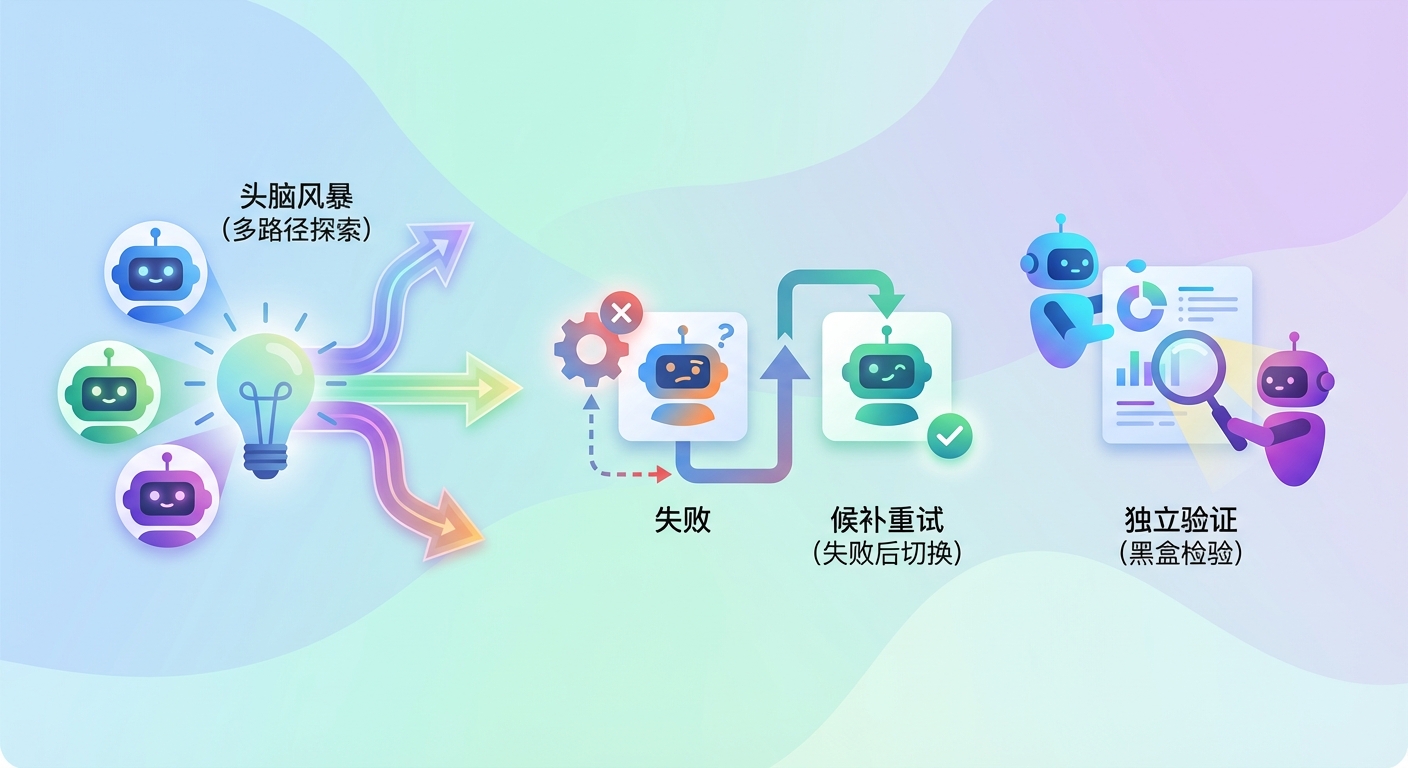

Brainstorming, Exploring Multiple Possibilities

Different LLMs excel in different domains. By introducing Agents equipped with different LLMs, TongCLI supports:

- Brainstorming: Agents equipped with different LLMs brainstorm together, broadening task execution paths

- Fallback retry: When a subtask execution fails, switch to an Agent equipped with a different LLM to retry

- Independent validation: Agents equipped with different LLMs perform black-box verification of results. This avoids the white-box validation and circular reasoning that occur when the same LLM validates its own output, increasing the probability that validation discovers problems.
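Fallback retry and independent validation can be sketched together as below. The two agents are plain functions standing in for Agents backed by different LLMs, and the sorting task is a toy chosen so that the validator can check the result from inputs and outputs alone; all names are hypothetical.

```python
from typing import Callable, Optional

Agent = Callable[[list[int]], list[int]]


def agent_a(numbers: list[int]) -> list[int]:
    """Hypothetical Agent backed by LLM A; here it returns a faulty result."""
    return list(numbers)  # forgets to sort


def agent_b(numbers: list[int]) -> list[int]:
    """Hypothetical Agent backed by LLM B; here it solves the task."""
    return sorted(numbers)


def validate(numbers: list[int], result: list[int]) -> bool:
    """Black-box check: looks only at input and output, not at how it was made."""
    in_order = all(x <= y for x, y in zip(result, result[1:]))
    same_items = sorted(numbers) == sorted(result)
    return in_order and same_items


def solve_with_fallback(
    numbers: list[int], agents: list[tuple[str, Agent]]
) -> Optional[tuple[str, list[int]]]:
    """Try each Agent in turn; accept the first result that passes validation."""
    for name, agent in agents:
        try:
            result = agent(numbers)
        except Exception:
            continue  # execution failed: fall back to the next Agent
        if validate(numbers, result):
            return name, result
    return None  # no Agent produced a result that passed validation
```

Because the validator never inspects how a result was produced, it does not inherit the blind spots of the Agent that produced it.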